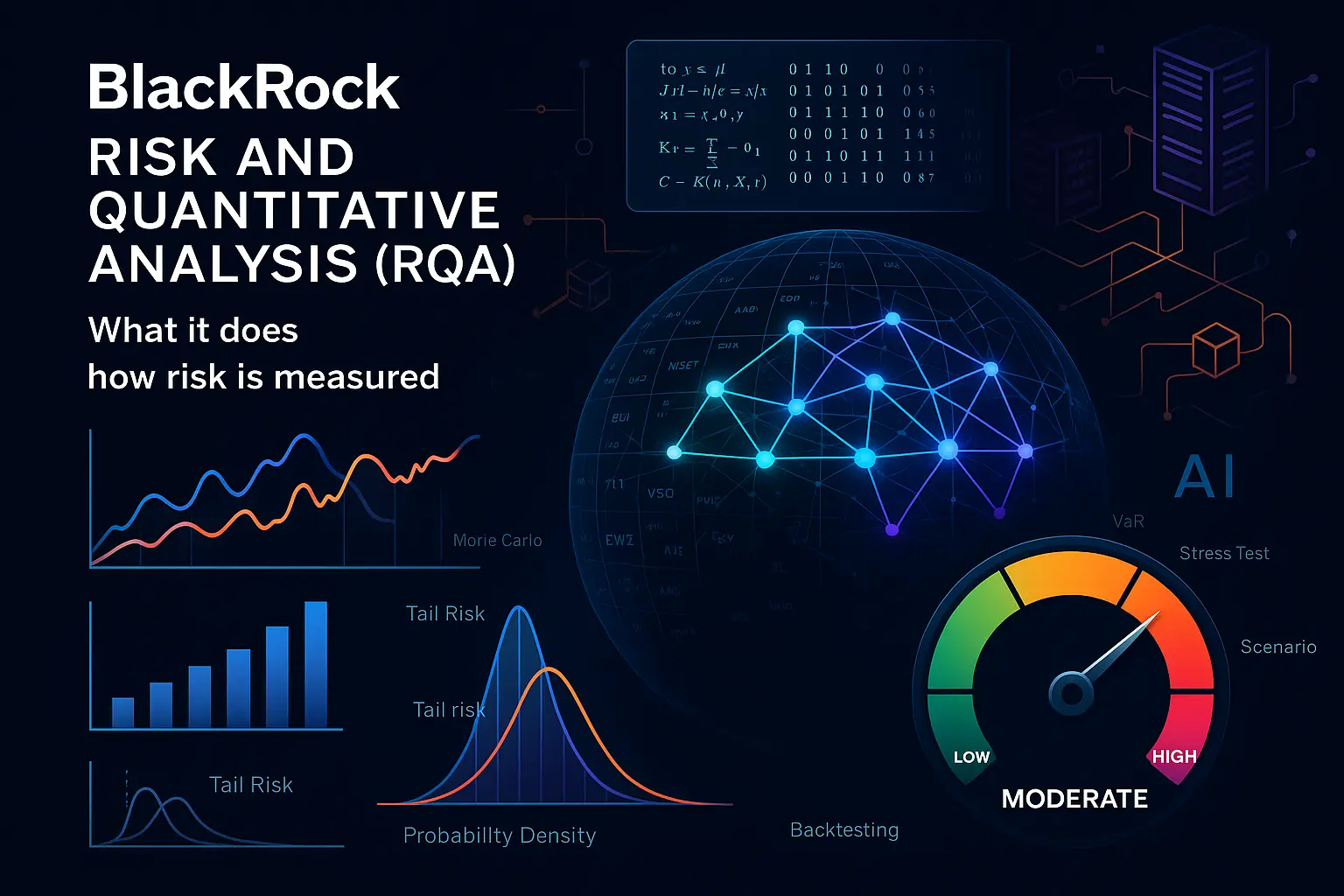

Risk and Quantitative Analysis (RQA) at BlackRock is the team that sits between markets and decisions — the people, models, and systems that translate market moves into clear answers: how much risk a portfolio has, what might break in a stress, and when to raise the alarm. This introduction explains why RQA matters for clients, investors and anyone curious about how modern portfolios are monitored, and it previews the practical stuff we’ll cover: what RQA does day-to-day, the math and scenarios that drive decisions, and where AI is already changing the job.

If you’ve wondered how big firms keep their portfolios from getting blindsided, RQA is the place to look. Think of it as three linked functions: measure exposures (what could move and by how much), set and enforce limits (what’s acceptable), and run what-if tests (how bad could it get). Those activities depend on platforms like Aladdin for daily risk runs, private-markets tooling for illiquid assets, and lots of data controls to make sure the numbers are trustworthy.

Today’s risk teams still rely on core tools (factor models, Value-at-Risk and Expected Shortfall, liquidity metrics, and scenario analysis), but AI is changing the workflow. Whether it is AI co-pilots that speed reporting and free up analysts’ time, NLP systems that turn news and transcripts into early-warning signals, or anomaly detection that spots odd bets or liquidity gaps, the objective is the same: faster, clearer, and more auditable risk insight. We’ll also cover the governance questions this raises, because explainability, monitoring for model drift, and secure data controls are non-negotiable when models inform big investment choices.

What follows is a practical guide, not theory: a look inside RQA’s mandate and daily tasks, a hands-on tour of the measurement toolkit, and a clear-eyed view of how AI is being used and governed. If you’re a client who wants clearer transparency, an investor thinking about where active managers add value, or a candidate who wants to know which skills matter — keep reading. The next sections walk through:

- Inside RQA: mandate, daily work, and the tech stack.

- How risk is measured: the core math, scenarios, liquidity and counterparty frameworks.

- AI in risk: what’s already working, where it helps most, and how to govern it safely.

- Why it matters: for clients, investors, and candidates — practical takeaways and interview-ready tasks.

Ready to dive into the specifics? Let’s start with how RQA organizes its mandate and the day-to-day mechanics of keeping portfolios honest.

Inside RQA: mandate, day-to-day work, and the tech stack

Mandate and independence: investment, model, counterparty, and enterprise risk oversight

RQA’s core mandate is to be the independent guardian of risk across the firm: to assess and aggregate exposures, validate models, monitor counterparties and collateral, and ensure enterprise-level resilience. That independence is operational — RQA typically reports into a risk or chief risk officer function rather than into individual investment lines — so its findings and controls can influence portfolio decisions, limits, and escalation chains without conflicts of interest. In practice that means RQA defines risk policy, signs off on model deployments, owns approval criteria for new instruments or counterparties, and runs firm-wide stress and reverse-stress testing exercises used by senior management and governance committees.

Independence is reinforced by clear roles: investment teams run portfolio decisions and performance attribution; RQA challenges assumptions, tests model outputs, and enforces limits; a separate model governance function maintains documentation, backtests and sign-off records. Escalation paths are explicit so breaches, model failures, or severe scenario outcomes are rapidly routed to coverage committees, compliance, and where relevant, the board.

What an RQA analyst actually does: exposures, limits, VaR, stress tests, liquidity reviews, committee packs

On any given day an RQA analyst balances monitoring, analysis, and communication. Typical recurring tasks include:

– Daily exposure and P&L attribution reviews: reconciling trade feeds, checking factor attributions, and spotting drift versus targeted risk budgets.

– Limit monitoring and breach management: maintaining hard and soft limits, creating exception reports, triaging breaches and documenting remediation or escalation actions.

– Risk engine runs and model output checks: producing Value‑at‑Risk (VaR), expected shortfall, and scenario outputs; comparing live numbers to backtest windows and prior-day baselines.

– Designing and executing stress tests: defining shock scenarios, running portfolio re‑valuations, and translating results into actionable mitigations for portfolio managers.

– Liquidity and funding reviews: assessing time‑to‑liquidate assumptions, market impact, haircut schedules, and redemption stresses across funds.

– Counterparty and collateral reviews: mapping exposures across CSAs, evaluating margin calls, and flagging potential wrong‑way risk.

– Preparing committee packs and client/regulator materials: summarising results, drafting narratives that explain drivers and recommended actions, and providing audit-ready evidence for model governance and control checks.

Practical skills on the job are a mix of quantitative and operational: building and interpreting factor decompositions, constructing simple scenario P&L runs, scripting data transformations for daily pipelines, and converting numeric outputs into concise recommendations for committees and portfolio teams.

Platforms and data: Aladdin risk engine, eFront for private markets, data quality and controls

RQA teams use an ecosystem of specialist platforms and in-house tools to deliver consistent, auditable risk metrics. Front-to-back platforms handle trade capture and accounting; dedicated risk engines compute factor exposures, VaR, and scenario revaluations; and private markets systems provide valuation inputs and cashflow modelling for illiquid holdings.

Data quality and control is the connective tissue. Analysts spend significant time on ingest pipelines, normalization, and reconciliation: confirming that trade records, market prices, reference data and corporate actions align across systems. This work covers automated checks (schema, range, missingness), daily reconciliation reports, lineage metadata for each input, and exception workflows so human review is focused where it matters.
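The automated checks described above (schema, range, missingness) and the exception workflow can be sketched in a few lines. This is a minimal illustration, not a description of any real pipeline: the field names, the price bounds, and the `validate_record` and `split_exceptions` helpers are all hypothetical.

```python
from math import isfinite

# Hypothetical schema for a price record; field names are illustrative only.
REQUIRED_FIELDS = {"security_id": str, "price": float, "currency": str}


def validate_record(record, min_price=0.0, max_price=1_000_000.0):
    """Return a list of issue tags found in one record (empty if clean)."""
    issues = []
    for field, expected_type in REQUIRED_FIELDS.items():
        value = record.get(field)
        if value is None:
            issues.append(f"missing:{field}")   # missingness check
        elif not isinstance(value, expected_type):
            issues.append(f"schema:{field}")    # schema (type) check
    price = record.get("price")
    if isinstance(price, float):
        # Range check: price must be finite and inside the assumed bounds.
        if not isfinite(price) or not (min_price < price <= max_price):
            issues.append("range:price")
    return issues


def split_exceptions(records):
    """Pass clean records onward and park the rest for human review."""
    clean, exceptions = [], []
    for record in records:
        issues = validate_record(record)
        if issues:
            exceptions.append((record, issues))
        else:
            clean.append(record)
    return clean, exceptions


records = [
    {"security_id": "ABC", "price": 101.25, "currency": "USD"},
    {"security_id": "DEF", "price": -5.0, "currency": "USD"},
    {"security_id": "GHI", "price": None, "currency": "EUR"},
    {"security_id": "JKL", "price": "12.5", "currency": "GBP"},
]
clean, exceptions = split_exceptions(records)
for record, issues in exceptions:
    print(record["security_id"], issues)
```

Only the first record reaches the clean list; the other three land in the exception queue with a tag that says which check failed, which is what lets human review focus on the records that need it.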

Typical tech-stack components you will see in a modern RQA environment include:

– Risk engine(s) for factor and scenario computation integrated with portfolio accounting outputs;

– Private markets and alternative asset systems for valuations and cashflow modelling;

– A data lake/warehouse and time-series stores for historical risk, market and factor data;

– Orchestration and scheduling (batch and near‑real‑time) to ensure timely runs and alerts;

– Scripting and analytics tools (Python, R, SQL) used for ad‑hoc analysis, model development, and automation of repetitive tasks;

– CI/CD and model governance platforms to version models, track tests, and maintain documentation and sign-offs;

– Monitoring, logging and audit trails so every run, data change, and report is reproducible for internal and external review.

Controls are layered: automated validation gates prevent invalid inputs from reaching the risk engine, pre‑production environments catch model changes, and reconciliation reports link accounting positions to risk outputs. The technical environment is therefore as much about reducing manual error and achieving reproducibility as it is about raw compute power.

With the mandate, daily workflows, and technology foundation laid out, the obvious next step is to look under the hood at how those systems and processes actually quantify and stress risks — the math, scenarios, and liquidity assumptions that drive decision-making across portfolios.

How risk is measured in practice: the core toolkit that drives decisions

Market risk math: factor models, volatility, Value-at-Risk and Expected Shortfall

At the center of daily risk measurement are factor-based systems and distributional metrics that translate positions into concentrations and loss estimates. Factor models map instruments to a set of common drivers (rates, equity indices, FX, credit spreads, commodities) so that exposure is decomposed into explainable buckets rather than thousands of individual securities. That decomposition supports concentration limits, attribution and hedging decisions.
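As a rough sketch of that decomposition, the snippet below rolls three positions up into factor exposures and expresses each as a share of portfolio value. The instruments, loadings, and the `factor_exposures` and `concentration_share` helpers are invented for illustration; real factor models estimate loadings statistically and use far more factors.

```python
from collections import defaultdict

# Hypothetical positions (market value, USD) and factor loadings (betas).
positions = {"BOND_A": 4_000_000.0, "EQUITY_B": 3_000_000.0, "EQUITY_C": 1_000_000.0}
loadings = {
    "BOND_A": {"rates": 0.9, "credit_spread": 0.4},
    "EQUITY_B": {"equity_index": 1.1},
    "EQUITY_C": {"equity_index": 0.8, "fx": 0.5},
}


def factor_exposures(positions, loadings):
    """Sum market value times loading across instruments, per factor."""
    totals = defaultdict(float)
    for instrument, market_value in positions.items():
        for factor, beta in loadings[instrument].items():
            totals[factor] += market_value * beta
    return dict(totals)


def concentration_share(exposures, nav):
    """Express each factor exposure as a fraction of portfolio value."""
    return {factor: abs(value) / nav for factor, value in exposures.items()}


exposures = factor_exposures(positions, loadings)
nav = sum(positions.values())
shares = concentration_share(exposures, nav)
for factor in sorted(shares, key=shares.get, reverse=True):
    print(f"{factor:14s} exposure={exposures[factor]:>12,.0f} share={shares[factor]:.1%}")
```

Three securities collapse into four explainable buckets, and the share column is the kind of number a factor concentration limit would be checked against.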

Volatility and correlation assumptions feed the aggregation step. Risk engines use historical or implied volatilities plus correlations across factors to convert exposures into portfolio-level measures. Two widely used summary metrics are Value‑at‑Risk (VaR), the loss that should not be exceeded over a given horizon at a given confidence level, and Expected Shortfall (ES), the average loss in the cases where that level is exceeded. For example, a one-day 99% VaR of $1 million means losses are expected to exceed $1 million on roughly one trading day in a hundred; ES answers how large the loss is, on average, on those days. Practically, teams run both: VaR for daily monitoring and backtesting, and ES for a more conservative view of tail risk.
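To make the two metrics concrete, here is a minimal historical-simulation sketch over a made-up series of daily P&L. The tail-size convention (rounding the number of tail observations) is one of several in use and is an assumption here, as are the function name and the data.

```python
def historical_var_es(pnl, confidence=0.95):
    """Return (VaR, ES) as positive loss numbers from a list of P&L values."""
    if not pnl:
        raise ValueError("pnl must not be empty")
    losses = sorted((-x for x in pnl), reverse=True)  # largest loss first
    # Assumed convention: the tail holds round(n * (1 - confidence)) observations.
    tail_count = max(1, round(len(losses) * (1 - confidence)))
    tail = losses[:tail_count]
    var = tail[-1]              # smallest loss inside the tail
    es = sum(tail) / len(tail)  # average loss across the tail
    return var, es


# Hypothetical daily P&L, in USD thousands (20 observations).
daily_pnl = [12, -5, 3, -20, 7, 1, -2, 15, -35, 4,
             6, -8, 9, -1, 2, -12, 5, 0, -3, 10]
var_90, es_90 = historical_var_es(daily_pnl, confidence=0.90)
print(f"90% VaR: {var_90}, 90% ES: {es_90}")
```

With 20 observations at 90% confidence the tail holds the two worst days (losses of 35 and 20), so VaR is 20 and ES is 27.5. ES is never smaller than VaR, which is why it reads as the more conservative figure.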

Model risk controls are key: backtests against realized P&L, sensitivity checks to factor choice and lookback window, and reconciliation between risk engine outputs and P&L explainers. Simple, replicable checks (one‑factor shocks, single-day replays) coexist with full Monte Carlo or historical-simulation runs to stress model assumptions.
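A backtest against realized P&L can start as simply as counting the days on which the loss exceeded the VaR forecast, then asking how likely that count would be if the model were right. The sketch below uses a plain binomial tail probability rather than a formal coverage test, and the data and function names are illustrative assumptions.

```python
from math import comb


def count_var_exceptions(realized_pnl, var_forecasts):
    """Count days on which the realized loss exceeded the VaR forecast."""
    if len(realized_pnl) != len(var_forecasts):
        raise ValueError("series must have the same length")
    return sum(1 for pnl, var in zip(realized_pnl, var_forecasts) if -pnl > var)


def prob_at_least(k, n_days, confidence):
    """P(at least k exceptions in n_days) if the VaR model is correct."""
    p = 1 - confidence
    return sum(
        comb(n_days, j) * p**j * (1 - p) ** (n_days - j)
        for j in range(k, n_days + 1)
    )


# Hypothetical realized P&L and a flat VaR forecast of 10 (same units).
realized = [3, -4, -12, 5, 1, -9, -15, 2, 0, -10]
forecasts = [10] * len(realized)

exceptions = count_var_exceptions(realized, forecasts)
p_value = prob_at_least(exceptions, len(realized), confidence=0.99)
print(f"exceptions={exceptions}, probability under a correct model={p_value:.4f}")
```

Two exceptions in ten days against a 99% VaR would be very unlikely if the model were well calibrated, which is the kind of result that prompts a review of the lookback window or factor choice. Real backtests use much longer windows than this toy sample.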

Scenarios that matter now: rate shocks, spread widening, equity drawdowns, commodity spikes, geopolitics

Scenario analysis complements distributional metrics by asking practical “what if” questions. Teams maintain a library of canonical shocks (large rate moves, sovereign or corporate spread widening, sector-specific equity drawdowns) and also build ad‑hoc scenarios tied to real events — central bank surprises, trade disruptions, or geopolitical flare-ups.

Good scenario design blends plausibility and severity: some scenarios mirror historical episodes (2008, 2020, regional crises) while others are hypothetical combinations (rates up + credit spreads widening + FX stress). Results are translated to actionable outputs: required hedging, rebalancing, liquidity cushions, or communication to investors and governance committees.
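A small scenario set can be approximated with first-order sensitivities before any full revaluation is run. The sketch below does exactly that and nothing more: it is a linear approximation that ignores convexity and cross effects, and every position, sensitivity, and scenario in it is hypothetical.

```python
# Hypothetical first-order sensitivities, in USD.
# dv01: loss per +1bp move in rates; cs01: loss per +1bp move in credit
# spreads; equity_per_pct: P&L per +1% move in the equity index.
positions = {
    "GOVT_BOND": {"dv01": 5_000, "cs01": 0, "equity_per_pct": 0},
    "CORP_BOND": {"dv01": 3_000, "cs01": 4_000, "equity_per_pct": 0},
    "EQUITY_FUND": {"dv01": 0, "cs01": 0, "equity_per_pct": 20_000},
}

# Hypothetical shocks: one single-factor scenario and one combined scenario.
scenarios = {
    "rates_up_100bp": {"rates_bp": 100, "spread_bp": 0, "equity_pct": 0},
    "risk_off_combo": {"rates_bp": 100, "spread_bp": 50, "equity_pct": -10},
}


def scenario_pnl(position, shock):
    """Linear P&L estimate for one position under one shock."""
    return (
        -position["dv01"] * shock["rates_bp"]
        - position["cs01"] * shock["spread_bp"]
        + position["equity_per_pct"] * shock["equity_pct"]
    )


def run_scenarios(positions, scenarios):
    """Return per-position and total P&L for every scenario."""
    results = {}
    for name, shock in scenarios.items():
        by_position = {pid: scenario_pnl(p, shock) for pid, p in positions.items()}
        results[name] = {"by_position": by_position, "total": sum(by_position.values())}
    return results


results = run_scenarios(positions, scenarios)
for name, result in results.items():
    print(f"{name}: total P&L {result['total']:,}")
```

The per-position breakdown is what turns a scenario total into an action: in the combined scenario the two bonds account for most of the loss, which points to a rates or credit hedge rather than an equity one.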

Liquidity and funding: time-to-liquidate, market impact, swing pricing, redemption modeling

Liquidity risk measurement is about translating mark‑to‑market losses into realized outcomes when positions must be sold. Common practical inputs include time‑to‑liquidate (how long to unwind a position without unacceptable market impact), estimated market impact per unit traded, and haircut schedules for collateral valuation.
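One common way to estimate time-to-liquidate is to cap daily selling at a fraction of average daily volume (ADV). The sketch below assumes a 10% participation rate and a 20-day horizon; both numbers, the holdings, and the `days_to_liquidate` helper are illustrative assumptions rather than a standard.

```python
import math


def days_to_liquidate(quantity, average_daily_volume, participation_rate=0.10):
    """Whole trading days needed to sell a position at a capped share of ADV."""
    if average_daily_volume <= 0 or not 0 < participation_rate <= 1:
        raise ValueError("volume must be positive and rate must be in (0, 1]")
    daily_capacity = average_daily_volume * participation_rate
    return math.ceil(abs(quantity) / daily_capacity)


# Hypothetical holdings: quantity held and average daily volume, in shares.
holdings = {
    "LARGE_CAP": {"quantity": 450_000, "adv": 1_000_000},
    "SMALL_CAP": {"quantity": 250_000, "adv": 400_000},
    "ILLIQUID": {"quantity": 95_000, "adv": 20_000},
}

HORIZON_DAYS = 20  # assumed liquidity horizon for flagging slow positions

days = {
    name: days_to_liquidate(h["quantity"], h["adv"])
    for name, h in holdings.items()
}
slow = [name for name, d in days.items() if d > HORIZON_DAYS]
print(days)
print("beyond horizon:", slow)
```

A lower participation rate lengthens the unwind but reduces market impact, so the rate is itself a stress parameter: rerunning the same holdings at 5% shows how quickly the liquidity profile deteriorates when volumes dry up.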

For pooled products, liquidity models also consider redemption behaviour and swing‑pricing mechanics that shift dilution costs back to redeeming investors. Redemption modelling often combines historical flow analysis with scenario-driven increases in outflows, producing run‑rate stress results used to set liquidity buffers and gating thresholds.
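A very simple version of that redemption stress takes the worst historical outflow, scales it by a stress multiplier, and compares the result with the liquid buffer. The flows, the multiplier of 2, and the 8% buffer below are made-up inputs, and real models condition on investor type and market state.

```python
def stressed_outflow(historical_outflows, stress_multiplier=2.0):
    """Worst observed outflow (fraction of NAV) scaled up, capped at 100%."""
    if not historical_outflows:
        raise ValueError("need at least one historical observation")
    return min(1.0, max(historical_outflows) * stress_multiplier)


def liquidity_shortfall(liquid_buffer, outflow):
    """Fraction of NAV that the liquid buffer cannot cover (zero if covered)."""
    return max(0.0, outflow - liquid_buffer)


# Hypothetical weekly net outflows, as fractions of fund NAV.
weekly_outflows = [0.01, 0.03, 0.02, 0.05, 0.015]

outflow = stressed_outflow(weekly_outflows)
shortfall = liquidity_shortfall(liquid_buffer=0.08, outflow=outflow)
print(f"stressed outflow: {outflow:.1%}, shortfall vs buffer: {shortfall:.1%}")
```

Here the stressed weekly outflow is 10% of NAV against an 8% buffer, leaving a 2% gap that would have to be met by selling less liquid assets or by raising the buffer.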

Funding risk ties to margining and short-term financing. Stress runs examine forced deleveraging paths: margin calls, widening haircuts, and the interaction between market moves and funding liquidity are translated into potential forced sales and liquidity shortfalls.

Counterparty and collateral: CSA terms, wrong-way risk, clearing/OTC exposure mapping

Counterparty exposure measurement is both contractual and market-driven. Analysts map trades to CSA/ISDA terms to identify netting sets, eligible collateral, margin frequency and thresholds. Those legal terms determine how much exposure is reduced in normal and stressed states.
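The effect of netting, thresholds, and minimum transfer amounts can be shown with simple arithmetic. The sketch below assumes a simplified one-way CSA with no initial margin, no haircuts, and no collateral returns; the trade values and CSA terms are invented.

```python
def net_exposure(trade_mtms):
    """Netting-set exposure: positive part of the summed mark-to-market."""
    return max(sum(trade_mtms), 0.0)


def margin_call(exposure, threshold, minimum_transfer_amount, collateral_held):
    """Collateral to call under a simplified one-way CSA (no returns modelled)."""
    required = max(exposure - threshold, 0.0)
    call = required - collateral_held
    # Calls below the minimum transfer amount are not made.
    return call if call >= minimum_transfer_amount else 0.0


# Hypothetical mark-to-market values for one netting set, USD millions.
trade_mtms = [5.0, -2.0, 1.5]
collateral_held = 2.0

gross = sum(m for m in trade_mtms if m > 0)  # exposure without netting
exposure = net_exposure(trade_mtms)          # exposure with netting
call = margin_call(
    exposure, threshold=1.0, minimum_transfer_amount=0.5,
    collateral_held=collateral_held,
)
residual = exposure - (collateral_held + call)  # left uncollateralised
print(f"gross={gross}, net={exposure}, call={call}, residual={residual}")
```

Netting cuts the exposure from 6.5 to 4.5, the call brings collateral up to 3.5, and the 1.0 that remains uncovered is exactly the threshold. That residual is the part of the exposure the legal terms leave open even when margining works as designed.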

Wrong‑way risk — where exposure increases as the counterparty’s credit quality deteriorates or as market moves are correlated with counterparty stress — is flagged explicitly. Measurement combines exposure profiles under stressed scenarios with counterparty credit indicators to surface combinations that warrant limits or additional collateralization.

The distinction between cleared and bilateral OTC trades matters operationally: cleared exposures have standardized margining but can concentrate short‑term funding risk, while bilateral OTC trades, even under robust CSAs, may still leave residual gap risk if collateral types or thresholds are unfavourable.

Limits and escalation: hard/soft limits, dashboards, breach workflows

Limits translate risk measurements into governance actions. Hard limits are non‑negotiable thresholds that trigger immediate escalation and often forced remediation steps; soft limits provide early‑warning thresholds prompting reviews and potential rebalancing. Limits are typically set by risk type (factor concentration, VaR/ES, liquidity ratio, counterparty exposure) and by granularity (portfolio, strategy, desk, legal entity).
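The hard/soft distinction maps naturally onto a three-state check. The sketch below is a minimal illustration; the metric names, limit levels, and status labels are assumptions, not any firm's actual limit framework.

```python
def limit_status(value, soft_limit, hard_limit):
    """Classify a metric against its soft (warning) and hard (breach) limits."""
    if soft_limit > hard_limit:
        raise ValueError("soft limit must not exceed hard limit")
    if value >= hard_limit:
        return "HARD_BREACH"
    if value >= soft_limit:
        return "SOFT_WARNING"
    return "OK"


# Hypothetical limits by risk type and the current metric values.
limits = {
    "var_pct_nav": {"soft": 0.020, "hard": 0.025},
    "single_factor_share": {"soft": 0.40, "hard": 0.50},
    "counterparty_exposure_musd": {"soft": 80.0, "hard": 100.0},
}
metrics = {
    "var_pct_nav": 0.018,
    "single_factor_share": 0.45,
    "counterparty_exposure_musd": 104.0,
}

statuses = {
    name: limit_status(metrics[name], lim["soft"], lim["hard"])
    for name, lim in limits.items()
}
escalate = [name for name, status in statuses.items() if status == "HARD_BREACH"]
print(statuses)
print("escalate immediately:", escalate)
```

In this example VaR is within budget, factor concentration triggers an early-warning review, and the counterparty exposure is a hard breach that goes straight to escalation.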

Dashboards are the operational nerve center: automated feeds show current metrics, trend lines, limit status, and exception lists. Breach workflows must be pre‑defined — who owns the remediation, required documentation, timing for committee notification, and any interim mitigations (hedges, position freezes, or liquidity buffers). Auditability is essential: every breach, decision and follow‑up is logged to support governance and regulatory reviews.

Together, these tools (factor models and tail metrics, scenario libraries, liquidity/funding frameworks, counterparty mapping, and disciplined limit processes) form a practical, reproducible toolkit that turns market data and positions into governance-grade decisions. With that quantitative foundation in place, the next natural question is how automation and advanced analytics are changing the speed, scale and auditability of these workflows and the controls around them.

AI in risk and quantitative analysis: what’s working and how to govern it

Risk co-pilots and automation: faster reporting, cleaner controls, and a reported 10–15 hours/week saved for advisors

AI co‑pilots and workflow automation are delivering concrete productivity gains in risk teams by taking over repetitive reporting, collation of evidence for controls, and first‑pass anomaly screening. That frees analysts to focus on judgement‑heavy tasks — scenario design, escalation decisions, and model criticism — rather than routine data assembly and formatting.

One finding from industry research gives a sense of scale, although it was measured for financial advisors rather than risk analysts: “10-15 hours saved per week by financial advisors (Joyce Moullakis).” Source: Investment Services Industry Challenges & AI-Powered Solutions, D-LAB research.

Governance for co‑pilots is straightforward in principle: (1) limit them to assistive roles (drafts, summarisation, templating), (2) require human sign‑off on all control and client outputs, and (3) instrument usage with audit logs so every automated action is reproducible and reviewable.

NLP for early-warning signals: turning news, transcripts, and geopolitics into portfolio scenarios

Natural language models are now effective at converting high‑volume unstructured inputs — newsfeeds, earnings calls, analyst transcripts, and policy announcements — into structured signals that feed scenario generation and monitoring. Rather than replacing macro teams, NLP accelerates signal triage and surfaces candidate scenarios for human validation.

As a headline from recent industry work puts it: “90% boost in information processing efficiency (Samuel Shen).” Source: Investment Services Industry Challenges & AI-Powered Solutions, D-LAB research.

Operationally this looks like automated event tagging, entity extraction for exposures (issuers, sectors, regions), and scripted scenario drafts that risk teams then refine. Effective governance requires provenance tracking (which source led to the signal), confidence scoring, and periodic calibration against human‑curated event lists so drift and false positives are controlled.

Anomaly detection: spotting outlier factor bets, liquidity gaps, and unusual flow patterns in minutes

Unsupervised and supervised ML models are proving valuable for near‑real‑time anomaly detection: identifying sudden factor concentration shifts, unusual trading flows, or liquidity deterioration before they show up in P&L. Typical implementations combine streaming position and trade feeds with feature engineering (turnover, bid‑ask widening, concentrated inflows) and alert thresholds that trigger analyst review.
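As a much simpler stand-in for the ML models described above, a rolling z-score on a single feature already shows the alerting pattern: compare each new observation with its recent history and flag large deviations for review. The window, threshold, and bid-ask data below are illustrative assumptions.

```python
from statistics import mean, stdev


def zscore_alerts(series, window=5, threshold=3.0):
    """Flag points that sit far from the mean of the preceding window."""
    alerts = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        sigma = stdev(history)
        if sigma == 0:
            continue  # flat history: a z-score is undefined, so skip
        z = (series[i] - mean(history)) / sigma
        if abs(z) >= threshold:
            alerts.append((i, z))
    return alerts


# Hypothetical bid-ask spreads in basis points, with one sudden widening.
spreads_bp = [10.0, 10.5, 9.8, 10.2, 10.1, 10.3, 9.9, 25.0, 10.4, 10.0]

alerts = zscore_alerts(spreads_bp)
for index, z in alerts:
    print(f"observation {index}: spread={spreads_bp[index]} z-score={z:.1f}")
```

Only the jump to 25 bp is flagged. Note that the outlier then sits inside the following windows and inflates their standard deviation, which can mask a second anomaly shortly after the first; production systems typically use robust statistics or exclude flagged points for that reason.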

To govern anomaly systems, teams must define alert precision/recall targets, label known edge cases, and maintain a human-in-the-loop review queue. Alerts should be ranked by plausibility and impact so scarce analyst time is focused where it matters.

GenAI for client and regulator-ready narratives: speed with reviewable, auditable outputs

Generative models accelerate routine narrative production — committee packs, risk commentary, and client letters — by turning analytics outputs into readable prose. The value is speed: faster delivery of consistent narratives and easier tailoring to different audiences (portfolio managers, clients, compliance).

Controls are essential: every GenAI draft must be tagged as machine‑generated, include the data snapshot used to create it, and require explicit editorial approval. Versioning and a change log (who edited what and why) turn a fast draft into an auditable artifact acceptable for regulatory review.

Model risk for AI: explainability, drift monitoring, documentation, and human-in-the-loop

Applying ML in risk expands traditional model‑risk practice rather than replacing it. Key governance pillars are explainability (feature importance, SHAP or LIME summaries), continuous performance and drift monitoring (input distribution shifts, target degradation), thorough documentation (data lineage, training process, hyperparameters) and mandatory human oversight for decisions with material impact.
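Input drift is often tracked with a population stability index (PSI), which compares the binned distribution of a feature at training time with its live distribution. The sketch below is one minimal way to compute it; the bin edges, the floor for empty bins, and the sample shares are assumptions, and any alert threshold should be calibrated rather than taken from a rule of thumb.

```python
import math


def bin_shares(values, edges):
    """Share of observations per bin; edges are ascending lower cut points."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[sum(1 for e in edges if v >= e)] += 1
    return [c / len(values) for c in counts]


def psi(expected_shares, actual_shares, floor=1e-6):
    """Population stability index between two binned distributions."""
    score = 0.0
    for e, a in zip(expected_shares, actual_shares):
        e, a = max(e, floor), max(a, floor)  # avoid log(0) on empty bins
        score += (a - e) * math.log(a / e)
    return score


# Hypothetical feature shares at training time versus in production.
training_shares = [0.25, 0.25, 0.25, 0.25]
live_shares = [0.10, 0.20, 0.30, 0.40]

drift = psi(training_shares, live_shares)
no_drift = psi(training_shares, training_shares)
print(f"PSI vs training: {drift:.3f} (identical distributions give {no_drift:.1f})")
```

PSI is zero when the two distributions match and grows as they diverge, so it works well as a single number on a monitoring dashboard. It says nothing about whether model accuracy has degraded, so it complements rather than replaces performance tracking.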

Regulators expect model inventories, backtest evidence, and stress scenarios for new ML models. Practical risk teams implement staged rollouts (shadow mode → pilot → production), automated checks before promotion, and “kill switches” to immediately revert to deterministic processes if anomalies appear.

Security first: NIST CSF 2.0, ISO 27002, SOC 2 to protect IP, data, and trust

Security and privacy are non‑negotiable with AI: training data, model weights and inference logs are valuable intellectual property and can contain sensitive client material, so AI systems and their vendors need rigorous cybersecurity due diligence. Frameworks and standards such as the NIST Cybersecurity Framework (CSF) 2.0, ISO/IEC 27002 and SOC 2 give risk teams a reference for setting controls on access, encryption, incident response, and supplier assessment.

Practically this means segregating production and development environments, encrypting data at rest and in transit, enforcing least‑privilege access to models and datasets, and requiring third‑party AI vendors to demonstrate compliance evidence before approval.

Taken together, these use cases show how AI is already shifting the daily rhythm of RQA — from manual collation to high‑value oversight — but they also illustrate that robust governance, explainability and auditability are prerequisites for adoption. With governance in place, teams can safely scale AI assistance and focus human capital on the judgment calls that machines cannot make. The natural next step is to translate these shifts into the client and talent implications that follow.

Why this matters for clients, investors, and candidates

For clients: clearer risk transparency, quicker scenario responses, and more resilient portfolios

Clients today expect two things from large asset managers: clear, explainable risk information and timely responses when markets shift. RQA delivers both by turning raw positions and market data into standardised metrics, scenario outputs, and concise narratives that are understandable to non‑quantitative stakeholders. That transparency helps clients evaluate tradeoffs — e.g., concentration vs expected return, liquidity buffers, or the cost of a bespoke hedge — and gives them confidence that shocks will be assessed and communicated quickly.

Beyond reporting, the real client benefit is operational: faster scenario runs and automated alerts enable quicker remedial action (rebalancing, targeted hedges, or liquidity provisioning) so portfolios are better positioned to absorb stress without costly knee‑jerk moves.

For investors: fee pressure, passive flows, and dispersion—why risk discipline is the edge in active

Active managers face structural headwinds that compress margins and raise the bar for performance. In that environment, disciplined risk management becomes a differentiator: it prevents outsized losses, preserves capacity to exploit market dislocations, and supports repeatable process execution. Investors prize managers who can demonstrate both upside capture and downside protection because consistent risk control reduces drawdown risk and increases the odds of long‑term outperformance.

Put simply, risk analytics are not just compliance: they are a source of strategic edge for investment teams that can translate quantitative insight into steadier performance and clearer client outcomes.

For candidates: Python/SQL, time-series, stress design, liquidity metrics, and clear storytelling

For people entering or moving within RQA, the role blends quantitative technique with operational fluency and communication. Employers typically look for candidates who can: manipulate time‑series data (SQL, Python/pandas), implement and interpret factor decompositions, design stress scenarios and liquidity tests, and build repeatable analytics pipelines.

Equally important is the ability to translate technical outputs into concise recommendations: committees and portfolio managers want clear conclusions, not raw dumps. The best candidates combine coding and math with disciplined documentation and presentation skills.

Interview-ready: build a simple scenario set, decompose factor risk, and articulate portfolio impact

To be interview‑ready for an RQA role, prepare three short, demonstrable pieces of work: (1) a small scenario set (e.g., parallel rate shift, credit spread widening, equity drawdown) with P&L revaluations across a handful of positions; (2) a factor‑risk decomposition for a sample portfolio showing contribution to volatility and concentration; and (3) a one‑page memo that distils the findings into actionable recommendations (hedge, reduce exposure, increase liquidity buffer) and the reasoning behind them.
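For the second exercise, the factor-risk decomposition, a common approach is to split portfolio volatility into additive factor contributions (exposure times marginal risk). The sketch below does this in plain Python for two factors; the exposures, volatilities, and correlation are invented inputs, and the function names are hypothetical.

```python
import math


def covariance_matrix(vols, corr):
    """Build a covariance matrix from volatilities and a correlation matrix."""
    n = len(vols)
    return [[vols[i] * vols[j] * corr[i][j] for j in range(n)] for i in range(n)]


def risk_contributions(exposures, cov):
    """Return portfolio volatility and each factor's additive contribution."""
    n = len(exposures)
    cov_times_w = [sum(cov[i][j] * exposures[j] for j in range(n)) for i in range(n)]
    variance = sum(exposures[i] * cov_times_w[i] for i in range(n))
    if variance <= 0:
        raise ValueError("portfolio variance must be positive")
    vol = math.sqrt(variance)
    # Contribution of factor i = exposure_i * (cov @ exposures)_i / vol
    contributions = [exposures[i] * cov_times_w[i] / vol for i in range(n)]
    return vol, contributions


# Hypothetical two-factor portfolio: exposures, annual vols, correlation.
factors = ["equity", "rates"]
exposures = [0.6, 0.4]
vols = [0.20, 0.10]
corr = [[1.0, 0.25], [0.25, 1.0]]

vol, contributions = risk_contributions(exposures, covariance_matrix(vols, corr))
for name, c in zip(factors, contributions):
    print(f"{name:7s} contribution={c:.4f} share={c / vol:.1%}")
print(f"portfolio volatility={vol:.4f}")
```

The contributions sum to total volatility, so the shares can be read directly as a concentration measure. Here equity holds 60% of the exposure but accounts for roughly 85% of the risk, which is the kind of gap between capital allocation and risk allocation a one-page memo should call out.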

These exercises show not only technical competence but also judgement and the ability to prioritise — the core skills that make a candidate valuable on day one.

Taken together, the points above show why robust risk analytics matter: they improve client outcomes, create a competitive advantage for active management, and define the practical skillset hiring teams prize. If you want to get practical fast, focus your next steps on delivering reproducible scenarios, mastering simple factor tools, and practising the concise storytelling that turns numbers into decisions.